LLM Reasoning

Chain-of-Thought

A prompting technique that has LLMs articulate intermediate reasoning steps before arriving at a final answer.
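As a rough illustration, the sketch below builds a zero-shot chain-of-thought prompt and pulls a numeric answer out of a completion. The helper names, the "Let's think step by step." trigger phrase, and the "The answer is N" output convention are assumptions chosen for this example, not part of any particular library.

```python
import re
from typing import Optional

def build_cot_prompt(question: str) -> str:
    """Append a reasoning trigger so the model writes its steps first (assumed format)."""
    return f"Q: {question}\nA: Let's think step by step."

def extract_final_answer(completion: str) -> Optional[str]:
    """Return the number after 'The answer is', or None if the pattern is absent."""
    match = re.search(r"The answer is\s*(-?\d+(?:\.\d+)?)", completion)
    return match.group(1) if match else None

# Hypothetical completion a model might return for the prompt above.
completion = (
    "Roger starts with 5 balls. 2 cans of 3 balls is 6 balls. "
    "5 + 6 = 11. The answer is 11."
)
prompt = build_cot_prompt("Roger has 5 balls and buys 2 cans of 3. How many now?")
answer = extract_final_answer(completion)
```

Keeping the final answer in a fixed pattern makes it easy to score completions automatically, which matters once reasoning traces become long.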

Tool Use

Extending LLM capabilities with external tools such as calculators, search engines, and code interpreters.
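One way to picture tool use is a small dispatcher: the model emits a structured call, and the host program runs the matching function and returns the result as text. The sketch below assumes a JSON call format with `name` and `arguments` fields and a hypothetical `calculator` tool; real APIs define their own schemas.

```python
import ast
import json
import operator

# Arithmetic operators the hypothetical calculator tool accepts.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def calculator(expression: str) -> float:
    """Evaluate +, -, *, / on numeric literals without calling eval()."""
    def ev(node):
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        raise ValueError("unsupported expression")
    return ev(ast.parse(expression, mode="eval").body)

# Registry mapping tool names to Python callables.
TOOLS = {"calculator": calculator}

def run_tool_call(raw_call: str) -> str:
    """Parse a JSON tool call (assumed schema) and return the tool output as text."""
    call = json.loads(raw_call)
    tool = TOOLS[call["name"]]
    return str(tool(**call["arguments"]))

# A call the model might emit instead of doing the arithmetic itself.
result = run_tool_call(
    '{"name": "calculator", "arguments": {"expression": "12 * (3 + 4)"}}'
)
```

The returned string would normally be appended to the conversation so the model can use it in its next turn.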

ReAct

Interleaving reasoning traces and actions, enabling LLMs to dynamically plan and interact with environments.
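The interleaving can be sketched as a loop that alternates model output (a thought plus an action) with an observation from the environment. Everything named here is an assumption for illustration: the `Action: tool[argument]` text format, the `lookup` tool, and `scripted_llm`, which stands in for a real model call.

```python
import re

ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*?)\]")

def lookup(term: str) -> str:
    """Hypothetical tool backed by a tiny in-memory fact table."""
    facts = {"boiling point of water": "100 C at sea level"}
    return facts.get(term.lower(), "no result")

def react_loop(llm, question, tools, max_steps=5):
    """Alternate model steps and tool observations until a finish action appears."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)          # model writes Thought + Action
        transcript += step + "\n"
        match = ACTION_RE.search(step)
        if match is None:
            break                       # no parsable action, stop early
        name, arg = match.groups()
        if name == "finish":
            return arg                  # final answer
        observation = tools[name](arg) if name in tools else f"unknown tool {name}"
        transcript += f"Observation: {observation}\n"
    return None

def scripted_llm(transcript: str) -> str:
    """Stand-in for a model: look something up first, then finish."""
    if "Observation:" not in transcript:
        return "Thought: I should look this up.\nAction: lookup[boiling point of water]"
    return "Thought: I have the answer.\nAction: finish[100 C at sea level]"

answer = react_loop(scripted_llm, "At what temperature does water boil?", {"lookup": lookup})
```

Because each observation is written back into the transcript, the next model step can revise its plan based on what the tool actually returned.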

Lab

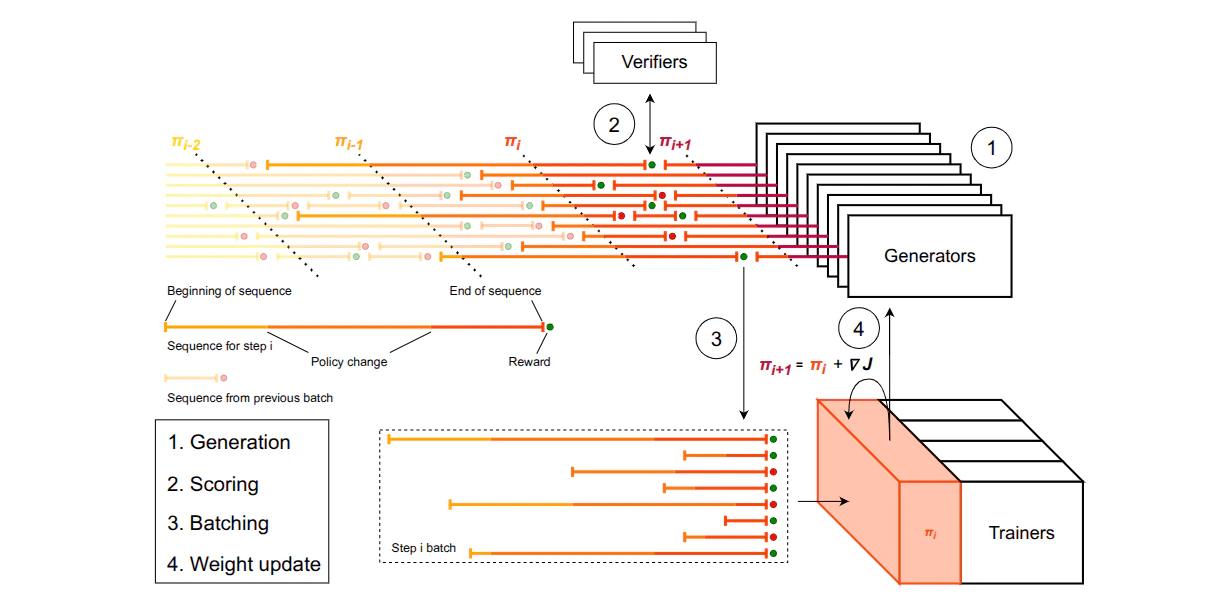

RL for Reasoning in Small LLMs

AAAI 2026. Fine-tunes DeepSeek-R1-Distill-Qwen-1.5B with GRPO on a compact math dataset. AMC23 accuracy improves from 63% to 80%, and AIME24 reaches 46.7%, surpassing o1-preview. The full training run costs about $42 on 4× A40 GPUs.
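For readers new to GRPO, its central step is scoring each sampled completion relative to the other completions for the same prompt, rather than against a learned value model. The sketch below shows only that group-relative advantage step, under stated assumptions: `exact_match_reward` is a hypothetical reward, and the population standard deviation with a small `eps` is one common normalization choice, not necessarily the configuration used in this lab.

```python
from statistics import mean, pstdev

def exact_match_reward(predicted: str, reference: str) -> float:
    """Hypothetical rule-based reward: 1.0 for a matching final answer, else 0.0."""
    return 1.0 if predicted.strip() == reference.strip() else 0.0

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one group of completions for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; an assumption of this sketch
    return [(r - mu) / (sigma + eps) for r in rewards]

# Four sampled answers to one math problem whose reference answer is "42".
samples = ["42", "41", "7", "42"]
rewards = [exact_match_reward(s, "42") for s in samples]
advantages = group_relative_advantages(rewards)
```

Correct samples receive positive advantages and incorrect ones negative, and when every sample in a group gets the same reward the advantages are all zero, so that prompt contributes no gradient signal.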

VLM Reasoning

VLM Reasoning

Visual chain-of-thought and grounded tool use for vision-language models.

Further reading

- Hugging Face — LLM Course, Chapter 12: Reasoning