Introduction

In probabilistic graphical modeling we saw the necessity of latent representations. In this section we extend the topic to the case where we need to learn features suitable for generating data from a complex data distribution. After reviewing the premise of generative modeling via what we will call deep latent variable models, we will look at the problem of inferring a latent space suitable for generating such data. We will see that this inference problem is intractable, and we will introduce variational inference, a method that allows us to approximate the posterior distribution over the latent variables. The specific method we will dive into is the Variational Autoencoder (VAE), which is used across various domains, including collaborative filtering, image compression, reinforcement learning, and the generation of content such as music and images. VAEs can also serve as a basis for understanding more advanced topics such as Stable Diffusion Models (SDMs), which are used to generate images and videos. We will focus on continuous VAEs in this edition of the course.

Calculus of Variations

One of the main methods of approximate inference is variational inference, which originates from the calculus of variations. To understand how this calculus differs from the one we are used to, recall that standard calculus uses the derivative of a function to optimize it, for example to find its minimum. In a similar way, the calculus of variations lets us optimize a functional. A functional in mathematics is a mapping from a function space to the real numbers, just as a function is a mapping from a real number (the argument) to a real value (the result of the function evaluation).

The concept of a functional can be illustrated with the definition of entropy from information theory. Shannon entropy, denoted as $H(p)$, is a measure of the uncertainty or randomness in a probability distribution. It is defined for a discrete probability distribution $p = (p_1, \dots, p_n)$, where $p_i$ is the probability of the $i$-th outcome. The entropy is defined as:

$$H(p) = -\sum_{i=1}^{n} p_i \log p_i$$

Here, $H$ is a functional because it maps a function (in this case, the probability distribution $p$) to a real number, which represents the entropy of $p$. The calculus of variations is concerned with finding the function that maximizes (or minimizes) a functional. In the case of entropy over a fixed finite set of outcomes, the function that maximizes the entropy is the uniform distribution, where all the outcomes have the same probability. This is intuitive: if all the outcomes have the same probability, then we have the most uncertainty about the outcome.

The Variational Generative Modeling Problem
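Before turning to the generative modeling problem, the entropy functional from the calculus of variations discussion can be checked numerically. The following is a minimal sketch, assuming NumPy is available; the helper name `shannon_entropy` and the example distributions are illustrative choices, not part of the course material. It evaluates the entropy of a few distributions over four outcomes and compares them with the uniform distribution, whose entropy is $\log 4$.

```python
import numpy as np

def shannon_entropy(p):
    """Entropy H(p) = -sum_i p_i * log(p_i), in nats.

    Outcomes with p_i = 0 contribute nothing to the sum.
    """
    p = np.asarray(p, dtype=float)
    nz = p > 0
    return float(-np.sum(p[nz] * np.log(p[nz])))

# Three illustrative distributions over four outcomes (hypothetical examples).
uniform = np.full(4, 0.25)
skewed = np.array([0.7, 0.1, 0.1, 0.1])
deterministic = np.array([1.0, 0.0, 0.0, 0.0])

h_uniform = shannon_entropy(uniform)
h_skewed = shannon_entropy(skewed)
h_det = shannon_entropy(deterministic)

# Randomly drawn distributions never exceed the entropy of the uniform one.
rng = np.random.default_rng(0)
random_dists = rng.dirichlet(np.ones(4), size=1000)
h_random_max = max(shannon_entropy(q) for q in random_dists)

print(f"uniform: {h_uniform:.4f}  skewed: {h_skewed:.4f}  deterministic: {h_det:.4f}")
print(f"largest entropy among random distributions: {h_random_max:.4f}")
```

The functional takes a whole distribution as input and returns a single number, which is the sense in which it differs from an ordinary function of a real argument.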

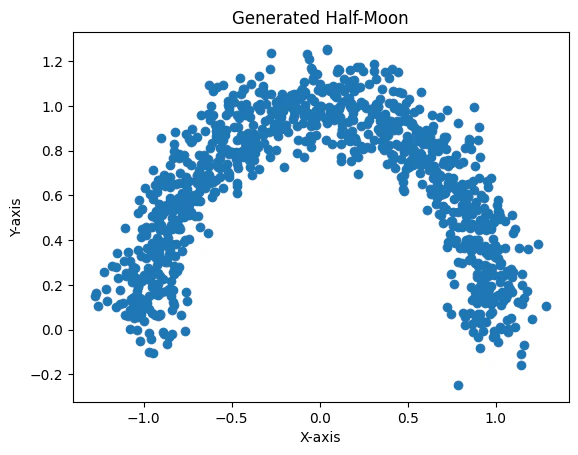

The VAE is one answer to the aforementioned variational optimization problem. In this approach, we use a neural network to create the right structure instead of explicitly encoding it in the prior. This is not a new idea: sampling from a complex probability distribution can be achieved by feeding samples from a simple distribution to a suitably chosen function. For example, a function can be used to change the mean of a standard normal distribution so that the resulting samples form a half-moon shape.
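The idea of pushing samples from a simple distribution through a function can be sketched in a few lines. This is an illustration under stated assumptions, not the function used in the course: the mean function `mean_fn`, the scalar latent variable, and the noise level `noise_std` are all hypothetical choices that happen to produce a half-moon. A latent $z$ is drawn from a standard normal, mapped to a point on the upper half of the unit circle, and that point is used as the mean of a narrow Gaussian from which $x$ is sampled.

```python
import numpy as np

def mean_fn(z):
    """Hypothetical mean function mu(z).

    Maps a scalar latent z to a point on the upper half of the unit circle.
    """
    theta = np.pi / (1.0 + np.exp(-z))  # squash z into the interval (0, pi)
    return np.stack([np.cos(theta), np.sin(theta)], axis=-1)

def sample_x(n, noise_std=0.05, seed=0):
    """Draw n samples x = mu(z) + noise_std * eps, with z and eps standard normal."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal(n)          # simple latent distribution
    eps = rng.standard_normal((n, 2))   # observation noise around the mean
    return mean_fn(z) + noise_std * eps

x = sample_x(1000)
radii = np.linalg.norm(x, axis=1)
print(x.shape, radii.mean(), x[:, 1].min())
```

In a VAE the hand-written `mean_fn` is replaced by a neural network whose parameters are learned from data, so the shape of the generated distribution does not have to be designed by hand.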

The Optimization Problem and Objective

However, there is a problem with this generator. Even with neural networks “designing” the right features in the latent space (see the footnote), we still face a very heavy computational problem in estimating the marginal distribution $p(x)$. To understand why, consider the MNIST dataset and the problem of generating handwritten digits that look like its examples. We can sample from the prior $p(z)$ to generate a large number of samples $z^{(1)}, \dots, z^{(m)}$ and, with the help of the neural network, compute $p(x \mid z^{(i)})$ for each sample and estimate $p(x) \approx \frac{1}{m} \sum_{i=1}^{m} p(x \mid z^{(i)})$. The problem is that we need a very large number of such samples in high-dimensional spaces such as images (for MNIST, $28 \times 28 = 784$ dimensions). Most of the samples will yield a negligible $p(x \mid z^{(i)})$ and therefore will not contribute to the estimate of $p(x)$. See the mnist-manifold notebook for details.

This is the problem that the VAE addresses. The key idea behind its design is to infer the right latent space, such that when $z$ is sampled it results in an $x$ that is very similar to our training data. In other words, the VAE buys us sample efficiency, allowing computation and optimization of the objective function with far less effort than before.

References

[1]: Diederik P. Kingma and Max Welling. Auto-Encoding Variational Bayes. In International Conference on Learning Representations, 2014, https://arxiv.org/abs/1312.6114

[2]: Yuri Burda, Roger Grosse, and Ruslan Salakhutdinov. Importance Weighted Autoencoders. In International Conference on Learning Representations, 2016, https://arxiv.org/abs/1509.00519

[3]: Diederik P. Kingma and Max Welling. An Introduction to Variational Autoencoders. Foundations and Trends in Machine Learning, Vol. 12, No. 4, pp. 307–392, 2019, https://arxiv.org/abs/1906.02691

Footnotes

- Note that the features the neural network captures are not necessarily interpretable in the way humans intuitively understand features. For the MNIST dataset, for example, a human would describe a digit by properties such as its slant or the thickness of its strokes.