Tokenizer types

Lets review the main tokenizers so we highlight such connection for each type specifically.Word level tokenization

The word-level tokenizers are intuitive, however, they suffer from the problem of unknown words, tagged as Out Of Vocabulary (OOV) words. They also tend to result into large vocabulary sizes, assigning different tokens to “can’t” and “cannot”. They also have issues with abbreviations eg. “U.S.A”.Character level tokenization

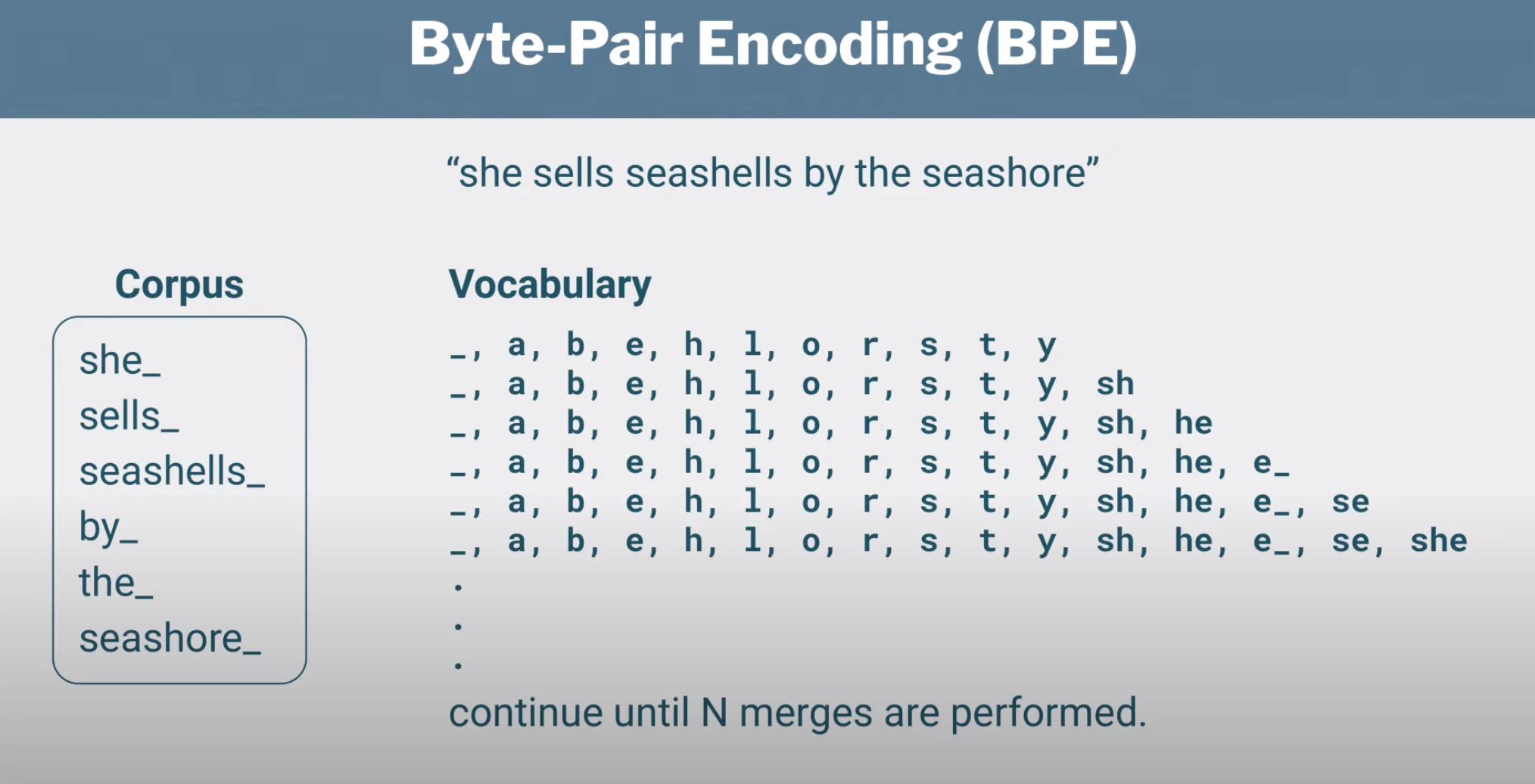

Character-level tokenization are more flexible as they bypass the OOV issue, however to capture the context of each word we need to use much longer sequences and this results in loss of performance.Byte Pair Encoding (BPE)

In BPE, one token can correspond to a character, an entire word or more, or anything in between and on average a token corresponds to 0.7 words. The idea behind BPE is to tokenize at word level frequently occuring words and at subword level the rarer words. GPT-3 uses a variant of BPE.

Ġ please note that this character is what the encoder produces for the space character. The encoder code doesn’t like spaces, so they replace spaces and other whitespace characters with other unicode bytes. The encoder more specifically takes all control and whitespace characters in code points 0-255 and shifts them up by 256 to make them printable. So space (code point 32) becomes Ġ (code point 288).

In the following we have another subword tokenization from the BERT tokenizer known as WordPiece.

We are going to test two tokenizers

cl100k_base that has a 100,000 vocabulary size and gpt2 that has approximately a 50,000 vocabulary size. The first one is used by the GPT-3.5 and GPT-4 models, while the second one is used by the GPT-2 model.

Select gpt2 from the list of tokenizers and observe the number of tokens produced. For the code above, this would be 186 tokens. The same text would result in 149 tokens with the cl100k_base tokenizer. Why is that and what is the impact of this on the model complexity?

-

gpt2needs more tokens to express the same words due to its less expressive vocabulary. This is exactly the same thing that happens if you dont know a word egintricateyou will use a longer phrase to express the same thing eg. very complicated`. -

gpt2will however result into a smaller model size since the vocabulary size is smaller, as evident by the transformer architecture. On the other hand, the increased token size will result into a larger requirement for context memory. The same python code will occupy more memory in thegpt2coding agent and the agent is more likely to be slower to respond since the longer sequences result into increased attention and memory requirements and also more likely to hallucinate since the model is more likely to hallucinate when its context reaches its limits.